WSL, or “Windows Subsystem for Linux”[1], is a feature of Microsoft Windows that lets users run a real Linux environment directly inside Windows without a traditional virtual machine or dual-boot setup. The latest version, WSL2, runs a lightweight virtualized Linux kernel for better compatibility and performance, which makes it especially useful for development, DevOps, and cybersecurity workflows where Linux tooling is essential but Windows remains the primary operating system. It was introduced in 2016 as part of Windows 10.

Category Archives: Security

Quick Howto: Extract URLs from RTF files, (Mon, Feb 9th)

Malicious RTF (Rich Text Format) documents are back in the news with the exploitation of CVE-2026-21509 by APT28.

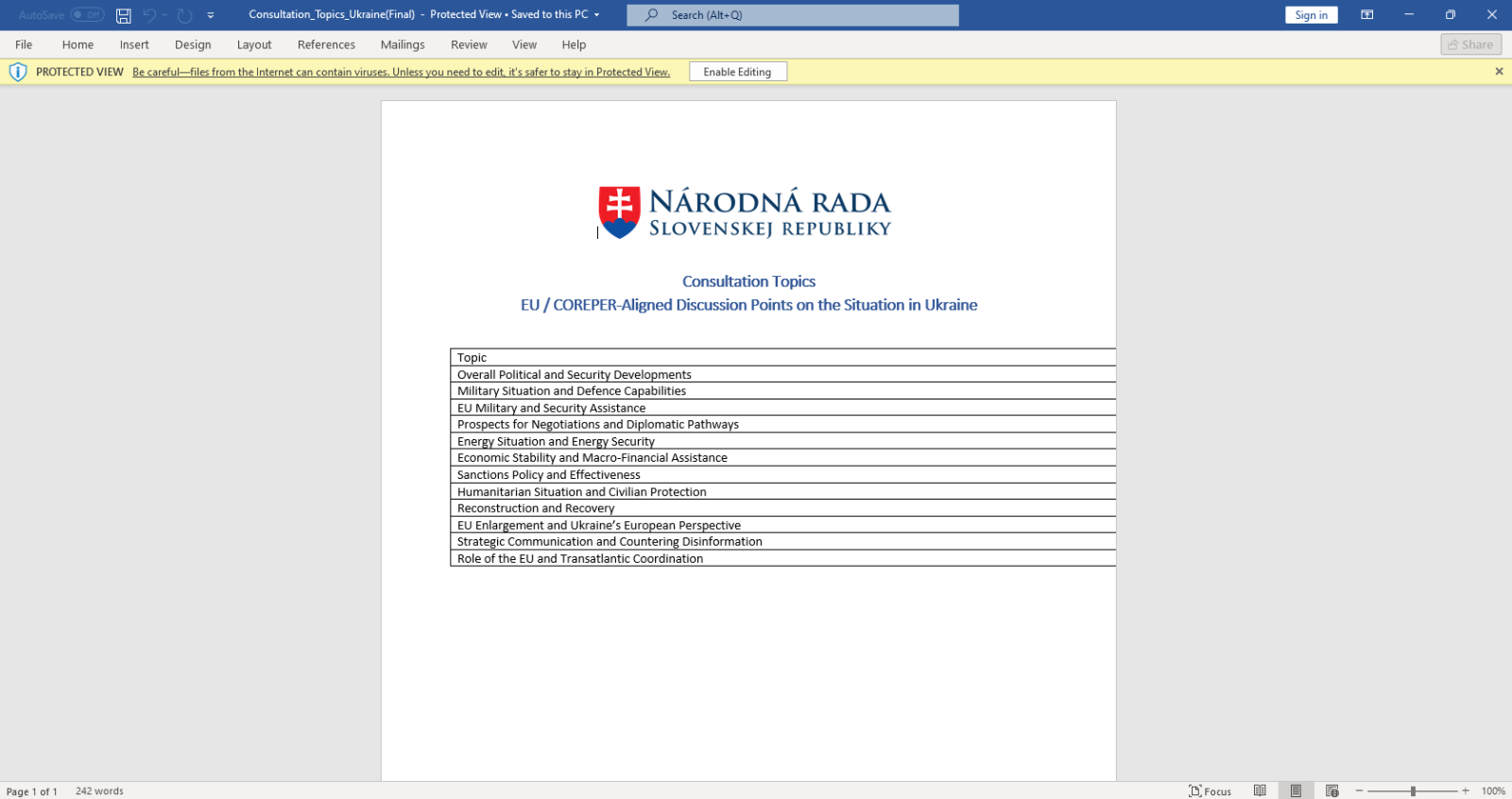

The malicious RTF documents BULLETEN_H.doc and Consultation_Topics_Ukraine(Final).doc mentioned in the news are RTF files (despite their .doc extension, a common trick used by threat actors).

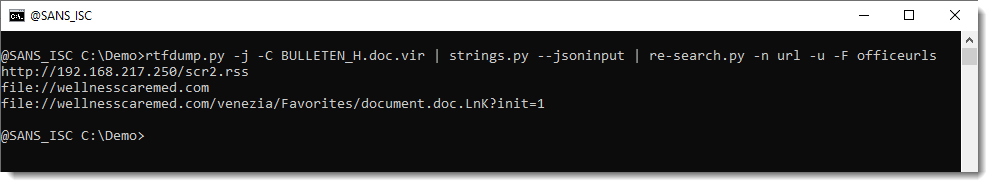

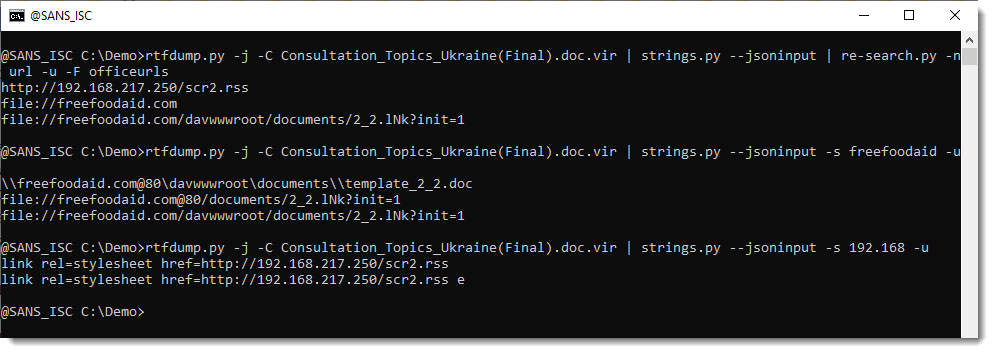
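Because the extension says nothing about the real format, a quick header check can confirm that a ".doc" sample is really RTF. The following is a minimal sketch, not part of the author's toolset: it assumes a strict `{\rtf` header, and the function names are hypothetical.

```python
RTF_MAGIC = b"{\\rtf"  # assumption: strict header check; obfuscated samples may deviate from it


def looks_like_rtf(data: bytes) -> bool:
    """Return True when the data starts with an RTF header, ignoring leading whitespace."""
    return data.lstrip().startswith(RTF_MAGIC)


def file_looks_like_rtf(path: str) -> bool:
    """Read only the first bytes of a file and apply the header check."""
    with open(path, "rb") as handle:
        return looks_like_rtf(handle.read(64))
```

A file that fails this check may still be malicious; the check only tells you which parser to reach for first.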

Here is a quick tip to extract URLs from RTF files. Use the following command:

rtfdump.py -j -C SAMPLE.vir | strings.py --jsoninput | re-search.py -n url -u -F officeurls

Like this:

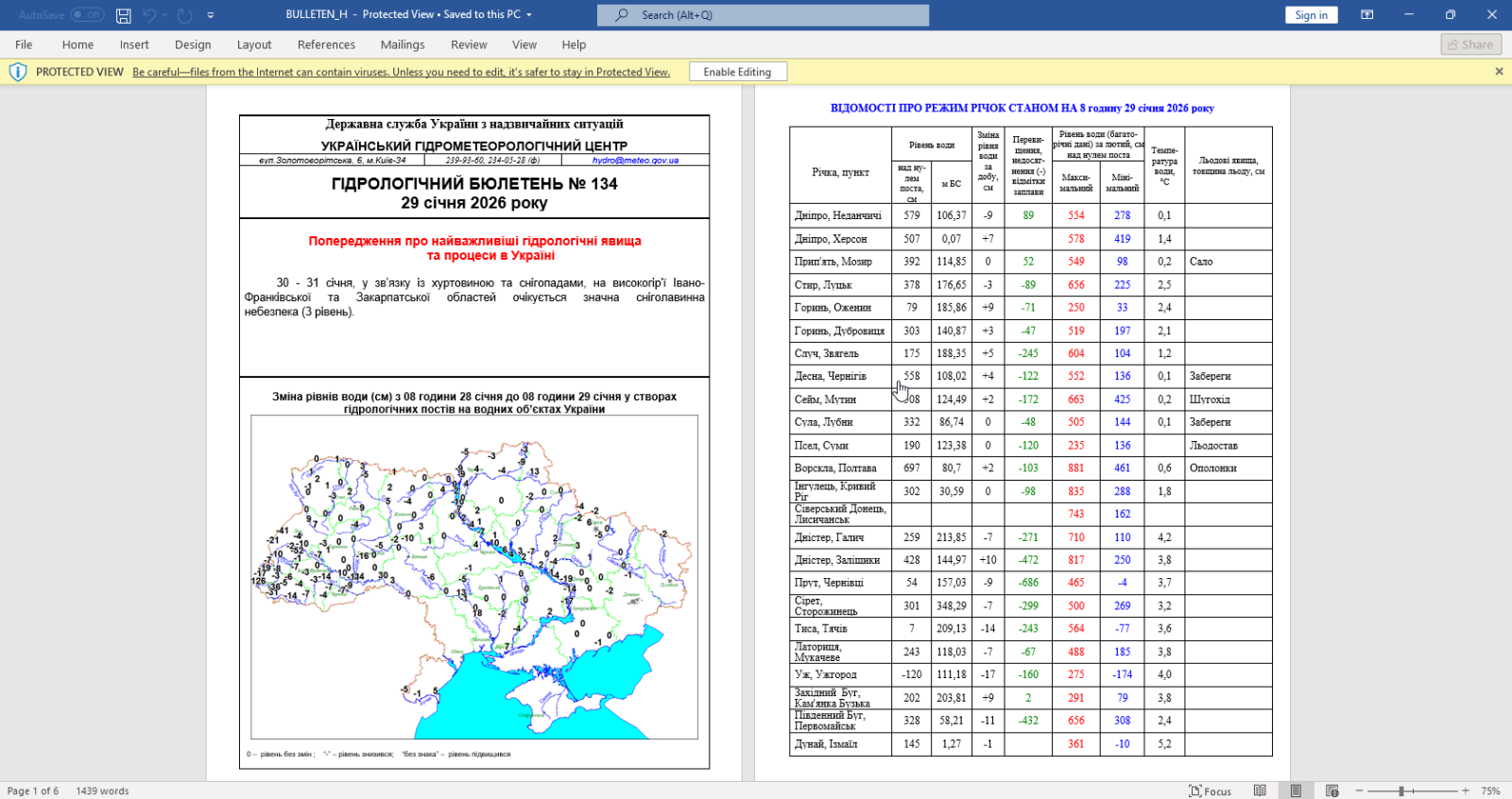

BTW, if you are curious, this is what that document looks like when opened:

Let me break down the command:

- rtfdump.py -j -C SAMPLE.vir: this parses the RTF file SAMPLE.vir and produces JSON output with the content of all the items found in the RTF document. Option -C ensures that all combinations are included in the JSON data: the item itself, the hex-decoded item (-H), and the hex-decoded and shifted item (-H -S). So for each item found inside the RTF file, 3 entries are produced in the JSON data.

- strings.py --jsoninput: this takes the JSON data produced by rtfdump.py and extracts all strings

- re-search.py -n url -u -F officeurls: this extracts all URLs (-n url) found in the strings produced by strings.py, performs a deduplication (-u) and filters out all URLs linked to Office document definitions (-F officeurls)
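For readers who want to see the idea behind the last step, here is a rough Python sketch of "extract URLs, deduplicate, filter out Office-related URLs". It is not how re-search.py is implemented: the regular expression is a simplified assumption, and `BENIGN_HOSTS` is a hypothetical stand-in for the officeurls filter.

```python
import re

# Simplified URL pattern (assumption); re-search.py's own "url" expression may differ.
URL_RE = re.compile(r"https?://[^\s\"'<>{}\\]+", re.IGNORECASE)

# Hypothetical stand-in for the officeurls filter: hosts often seen in Office document definitions.
BENIGN_HOSTS = (
    "schemas.openxmlformats.org",
    "schemas.microsoft.com",
    "purl.org",
    "www.w3.org",
)


def extract_urls(strings):
    """Return the unique, non-filtered URLs found in an iterable of strings, in order of appearance."""
    seen = set()
    result = []
    for text in strings:
        for url in URL_RE.findall(text):
            host = url.split("/")[2].lower()  # text between "//" and the next "/"
            if host.endswith(BENIGN_HOSTS):
                continue  # comparable in spirit to -F officeurls
            if url not in seen:  # comparable in spirit to -u
                seen.add(url)
                result.append(url)
    return result
```

Feeding it the strings extracted from a sample should leave only the URLs worth a closer look.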

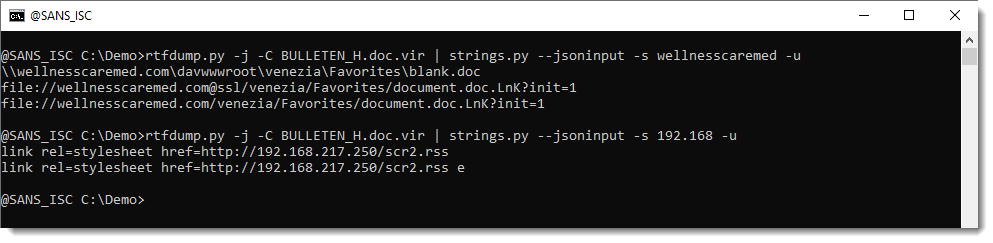

So I have found one domain (wellnesscaremed) and one private IP address (192.168…). What I then like to do is search for these keywords in the string list, like this:

I found extra IOCs: a UNC path and a "malformed" URL. The URL has its hostname followed by @ssl. This is not according to standards. @ can be used to introduce credentials, but then it has to come in front of the hostname, not behind it. So that's not the case here. More on this later.

Here are the results for the other document:

Notice that this time, we have @80.

I believe that this @ notation is used by Microsoft to provide the port number when WebDAV requests are made (via UNC). If you know more about this, please post a comment.
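To make that interpretation concrete, here is a small sketch that rewrites such a UNC-style path into the HTTP(S) URL it would correspond to if the guess is right. Everything here is an assumption for illustration: the `\\host[@ssl][@port]\path` layout, the function name, and the example hostnames.

```python
def unc_to_webdav_url(unc: str) -> str:
    """Translate \\\\host[@ssl][@port]\\path into an http(s) URL (assumed WebDAV-over-UNC form)."""
    if not unc.startswith("\\\\"):
        raise ValueError("not a UNC path")
    host_part, _, path = unc[2:].partition("\\")
    host, *markers = host_part.split("@")
    scheme, port = "http", None
    for marker in markers:
        if marker.lower() == "ssl":
            scheme = "https"  # assumption: @ssl selects HTTPS
        elif marker.isdigit():
            port = int(marker)  # assumption: a numeric marker is the TCP port
    netloc = host if port is None else f"{host}:{port}"
    return f"{scheme}://{netloc}/" + path.replace("\\", "/")


print(unc_to_webdav_url(r"\\example.test@ssl\dav\file.lnk"))
print(unc_to_webdav_url(r"\\example.test@80\dav\file.lnk"))
```

Under these assumptions, the first call yields an https URL and the second an http URL on port 80.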

In an upcoming diary, I will show how to extract URLs from ZIP files embedded in the objects in these RTF files.

Didier Stevens

Senior handler

blog.DidierStevens.com

(c) SANS Internet Storm Center. https://isc.sans.edu Creative Commons Attribution-Noncommercial 3.0 United States License.

Broken Phishing URLs, (Thu, Feb 5th)

Detecting and Monitoring OpenClaw (clawdbot, moltbot), (Tue, Feb 3rd)

Last week, a new AI agent framework was introduced to automate "life". It targets office work in particular, focusing on messaging and interacting with systems. The tool has gone viral not so much because of its features, which are similar to those of other agent frameworks, but because of a stream of security oversights in its design.

Scanning for exposed Anthropic Models, (Mon, Feb 2nd)

Yesterday, a single IP address (204.76.203.210) scanned a number of our sensors for what looks like an Anthropic API node. The IP address is known to be a Tor exit node.

The requests are pretty simple:

GET /anthropic/v1/models

Host: 67.171.182.193:8000

X-Api-Key: password

Anthropic-Version: 2023-06-01

It looks like this is scanning for locally hosted Anthropic models, but it is not clear to me if this would be successful. If anyone has any insights, please let me know. The API Key is a commonly used key in documentation, and not a key that anybody would expect to work.
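If you want to look for similar probes in your own logs, a simple matcher is enough to get started. This is a minimal sketch under stated assumptions: the function name and the (method, path, headers) input shape are hypothetical, and the matching rules are derived only from the request shown above.

```python
def is_anthropic_model_probe(method: str, path: str, headers: dict) -> bool:
    """Flag requests resembling the scan above: a GET for a '/v1/models' path with an Anthropic-Version header."""
    normalized = {name.lower(): value for name, value in headers.items()}
    return (
        method.upper() == "GET"
        and path.split("?", 1)[0].rstrip("/").lower().endswith("/v1/models")
        and "anthropic-version" in normalized
    )


sample_headers = {
    "Host": "67.171.182.193:8000",
    "X-Api-Key": "password",
    "Anthropic-Version": "2023-06-01",
}
print(is_anthropic_model_probe("GET", "/anthropic/v1/models", sample_headers))
```

Requiring the header keeps generic "/v1/models" requests for other APIs out of the results, at the cost of missing probes that omit it.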

At the same time, we are also seeing a small increase in requests for "/v1/messages". These requests have been more common in the past, but the URL may be associated with Anthropic (it is, however, somewhat generic, and it is likely that other APIs use the same endpoint). These requests originate from 154.83.103.179, an IP address with a somewhat complex geolocation and routing footprint.

—

Johannes B. Ullrich, Ph.D. , Dean of Research, SANS.edu


Google Presentations Abused for Phishing, (Fri, Jan 30th)

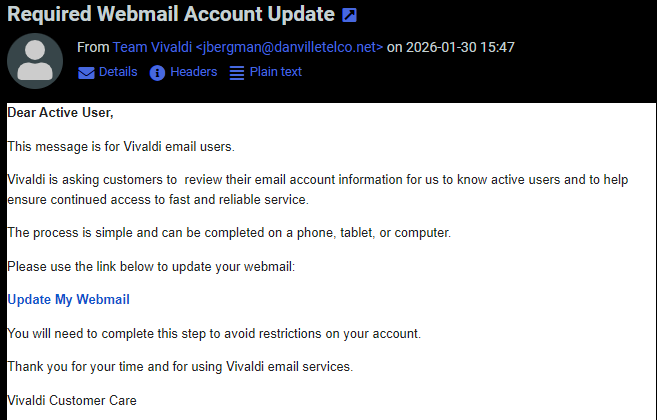

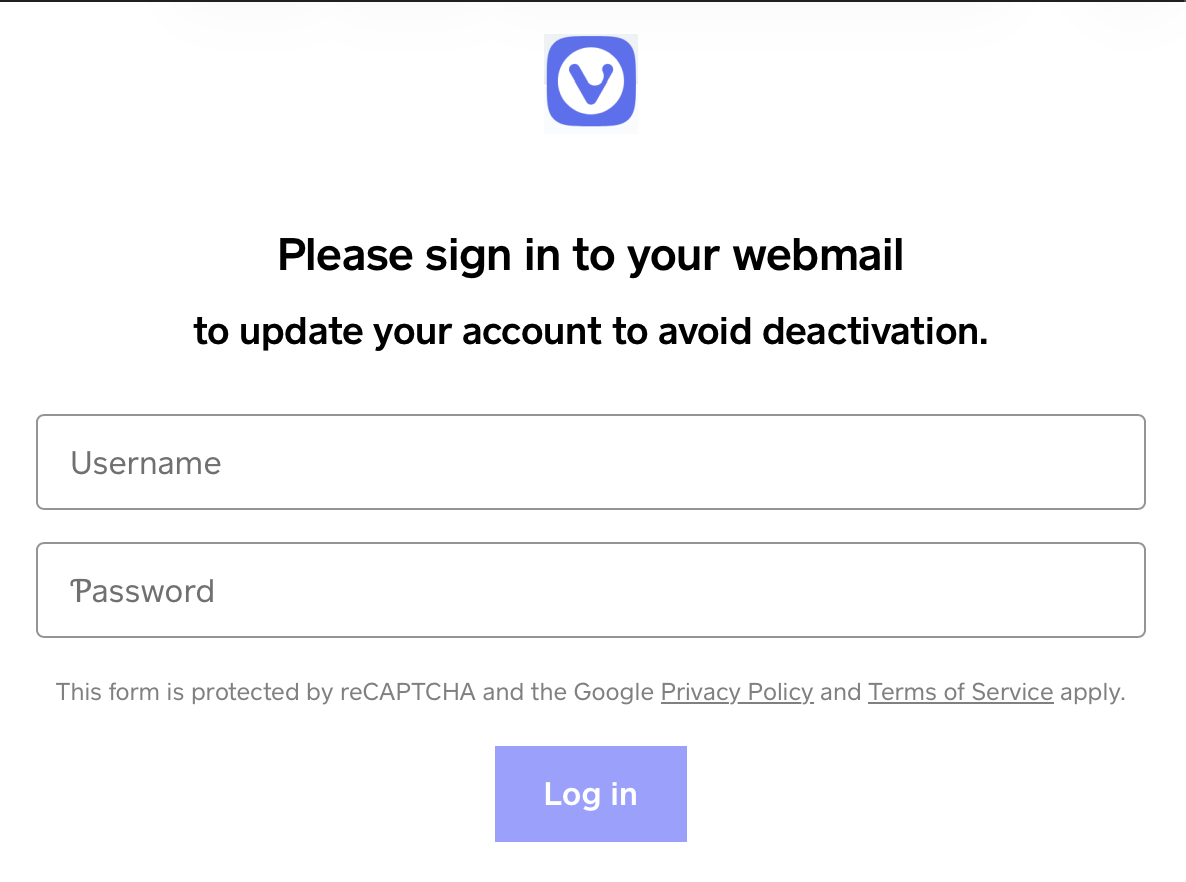

Charlie, one of our readers, has forwarded an interesting phishing email. The email was sent to users of the Vivaldi Webmail service. While not overly convincing, the email is likely sufficient to trick a non-empty group of users:

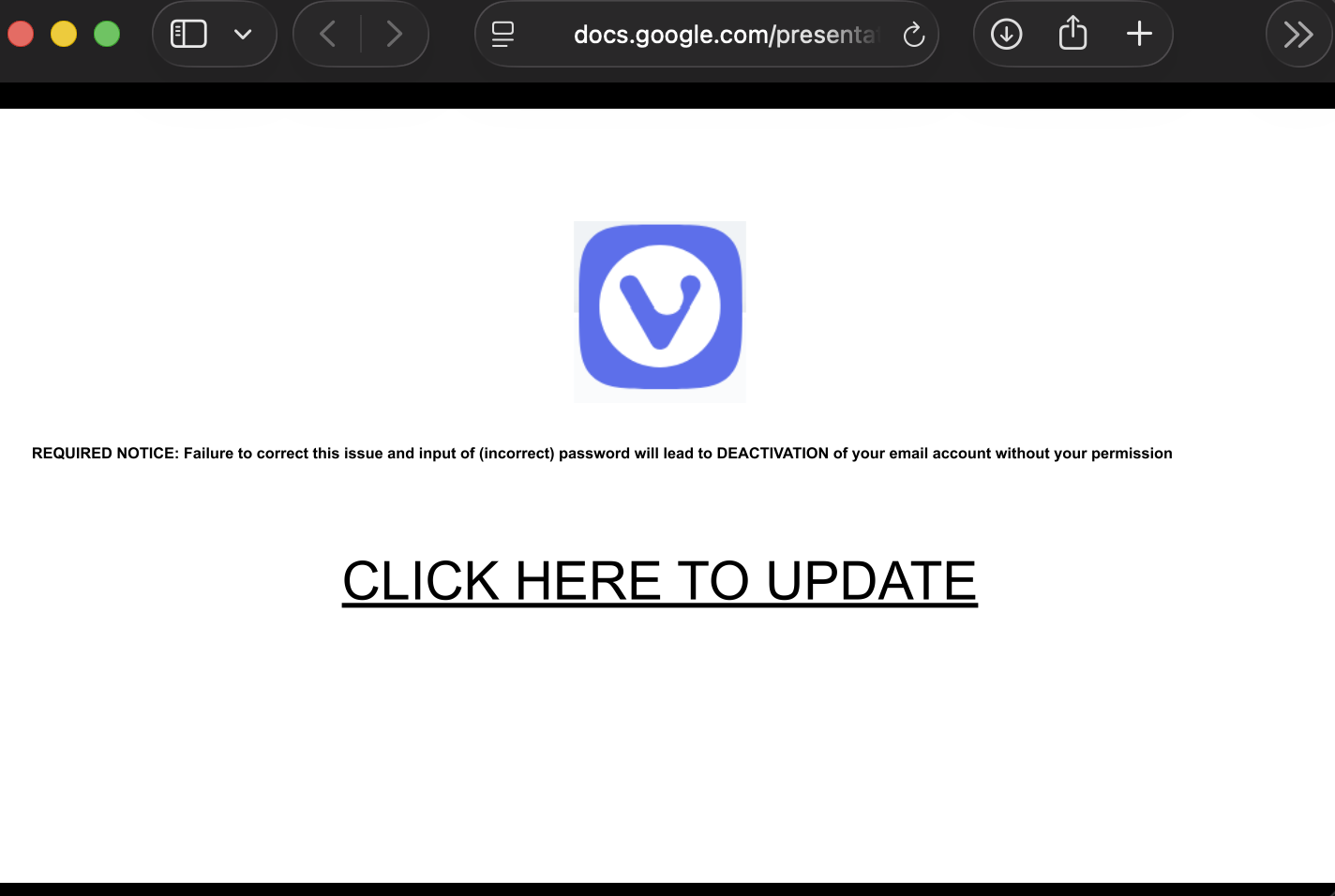

The e-mail gets more interesting as the user clicks on the link. The link points to Google Documents and displays a slide show:

Usually, Google Docs displays a footer notice that warns viewers about phishing sites and offers a "reporting" link if a page is used for phishing. Both are missing in this case. At first, I suspected some HTML/JavaScript/CSS tricks, but it turns out that this isn't a bug; it is a feature!

Usually, if a user shares slides, the document opens in an "edit" window. This can be avoided by replacing "edit" with "preview" in the URL, but the footer still makes it obvious that this is a set of slides. To remove the footer, the slides have to be "published," and the resulting link must be shared. When publishing, the slides will auto-advance. But for a one-slide slideshow, this isn't an issue. There is also a setting to delay the advance. Here are some sample links:

[These links point to a simple sample presentation I created, not the phishing version.]

Normal Share:

https://docs.google.com/presentation/d/1Quzd6bbuPlIcTOorlUDzSuJCXiOyqBTSHczo6hnXcac/edit?usp=sharing

Preview Share:

https://docs.google.com/presentation/d/1Quzd6bbuPlIcTOorlUDzSuJCXiOyqBTSHczo6hnXcac/preview?usp=sharing

Publish Share:

https://docs.google.com/presentation/d/e/2PACX-1vRaoBusJAaIoVcNbGsfVyE0OuTP1dS-2Po9lpAN9GGy2EkbZG_oR9maZDS7cq2xW_QeiF8he457hq3_/pub?start=false&loop=false&delayms=30000

The URL parameters in the last link tell the viewer not to auto-start the presentation, not to loop it, and to delay the advance to the next slide by 30 seconds (delayms=30000).
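When triaging such links, it can help to pull these settings out of the URL programmatically. The sketch below uses only the Python standard library; the function name and the returned keys are my own choices, and a parameter missing from the URL is reported as None rather than guessing Google's default.

```python
from urllib.parse import parse_qs, urlsplit


def publish_settings(url: str) -> dict:
    """Extract the start, loop, and delayms parameters from a published-slides URL."""
    query = parse_qs(urlsplit(url).query)

    def first(name):
        values = query.get(name)
        return values[0] if values else None

    start, loop, delay = first("start"), first("loop"), first("delayms")
    return {
        "start": None if start is None else start == "true",
        "loop": None if loop is None else loop == "true",
        "delay_seconds": None if delay is None else int(delay) / 1000,
    }


url = (
    "https://docs.google.com/presentation/d/e/"
    "2PACX-1vRaoBusJAaIoVcNbGsfVyE0OuTP1dS-2Po9lpAN9GGy2EkbZG_oR9maZDS7cq2xW_QeiF8he457hq3_"
    "/pub?start=false&loop=false&delayms=30000"
)
print(publish_settings(url))
# → {'start': False, 'loop': False, 'delay_seconds': 30.0}
```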

The Vivaldi webmail phishing ended up on a "classic" phishing login form that was created using Square. So far, this form is still visible at

hxxps [:] //vivaldiwebmailaccountsservices[.]weeblysite[.]com


—

Johannes B. Ullrich, Ph.D. , Dean of Research, SANS.edu


Odd WebLogic Request. Possible CVE-2026-21962 Exploit Attempt or AI Slop?, (Wed, Jan 28th)

I was looking for possible exploitation of CVE-2026-21962, a recently patched WebLogic vulnerability. While looking for related exploit attempts in our data, I came across the following request:

GET /weblogic//weblogic/..;/bea_wls_internal/ProxyServlet

host: 71.126.165.182

user-agent: Mozilla/5.0 (compatible; Exploit/1.0)

accept-encoding: gzip, deflate

accept: */*

connection: close

wl-proxy-client-ip: 127.0.0.1;Y21kOndob2FtaQ==

proxy-client-ip: 127.0.0.1;Y21kOndob2FtaQ==

x-forwarded-for: 127.0.0.1;Y21kOndob2FtaQ==

According to write-ups about CVE-2026-21962, this request is related [2]. However, the request also matches an earlier "AI Slop" PoC [3][4]. Another write-up, which also sounds very AI-influenced, suggests a very different exploit mechanism that does not match the request above [5].

The source IP is 193.24.123.42. Our data shows sporadic HTTP scans for this IP address, and it appears to be located in Russia. Not terribly remarkable at that. In the past, the IP has used the "Claudbot" user-agent. But it does not have any actual affiliation with Anthropic (not to be confused with the recent news about clawdbot).

The exploit is a bit odd. First of all, it does use the loopback address as an "X-Forwarded-For" address. This is a common trick to bypass access restrictions (I would think that Oracle is a bit better than to fall for a simple issue like that). There is an option to list multiple IPs, but they should be delimited by a comma, not a semicolon.

The base64-encoded string decodes to "cmd:whoami". This suggests a simple command injection vulnerability. Possibly, the content of the header is base64 decoded and then passed as a command line argument? Certainly an odd mix of encodings in one header, and unlikely to work.
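Both observations are easy to reproduce. The short sketch below (helper names are hypothetical) splits the header value the way a comma-delimited address list would be split, and decodes the part after the semicolon:

```python
import base64


def split_forwarded_for(value: str) -> list:
    """Split an X-Forwarded-For style value on commas, the usual list delimiter."""
    return [part.strip() for part in value.split(",")]


def decode_trailing_token(value: str) -> str:
    """Base64-decode whatever follows the first semicolon in the header value."""
    _, _, token = value.partition(";")
    return base64.b64decode(token, validate=True).decode("ascii")


header = "127.0.0.1;Y21kOndob2FtaQ=="
print(split_forwarded_for(header))    # → ['127.0.0.1;Y21kOndob2FtaQ==']
print(decode_trailing_token(header))  # → cmd:whoami
```

A parser that expects commas sees a single, invalid "address" here, which is one more reason to doubt that the request works as intended.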

Let's hope this is AI slop and the exploit isn't that easy. We have seen a significant uptick in requests that include the wl-proxy-client-ip header, starting on January 21st, but the header has been used before. It is a typical exploit that AI may come up with after seeing keywords like "Weblogic Server Proxy Plug-in".

I asked ChatGPT, Grok, and Google Gemini if this is an exploit or AI slop. The abbreviated answers:

ChatGPT: "This looks more like a “scanner/probe that’s trying to look like an exploit” than a complete, working exploit by itself — but it’s not random either. It’s borrowing real WebLogic attack ingredients."

Grok: "This is an actual exploit attempt — not just random "AI slop" or nonsense traffic."

Google Gemini: "That is definitely an actual exploit attempt, not AI slop. Specifically, it is targeting a well-known vulnerability in Oracle WebLogic Server."

[1] https://nvd.nist.gov/vuln/detail/CVE-2026-21962

[2] https://dbugs.ptsecurity.com/vulnerability/PT-2026-3709

[3] https://x.com/0xacb/status/2015473216844620280

[4] https://github.com/Ashwesker/Ashwesker-CVE-2026-21962/blob/main/CVE-2026-21962.py

[5] https://www.penligent.ai/hackinglabs/the-ghost-in-the-middle-a-definitive-technical-analysis-of-cve-2026-21962-and-its-existential-threat-to-ai-pipelines/

—

Johannes B. Ullrich, Ph.D. , Dean of Research, SANS.edu


Initial Stages of Romance Scams [Guest Diary], (Tue, Jan 27th)

[This is a Guest Diary by Fares Azhari, an ISC intern as part of the SANS.edu BACS program]

Romance scams are a form of social-engineering fraud that causes both financial and emotional harm. They vary in technique and platform, but most follow the same high-level roadmap: initial contact, relationship building, financial exploitation. In this blog post I focus on the initial stages of the romance scam: how scammers make contact, build rapport, and prime victims for later financial requests.

I was contacted by two separate romance scammers on WhatsApp. I acted like a victim falling for their scam and spent around two weeks texting each one. This allowed me to observe the first few phases, which we discuss below. I was not able to reach the monetization phase, as that often takes months and I could not maintain the daily time investment needed to convince the scammers I was fully falling for it.

The scammers claimed to be called "Chloe" and "Verna". We use these names throughout to differentiate their messages. Snippets from each are included to illustrate the phases, along with my precursor or response messages.

Phase 1: Initial contact

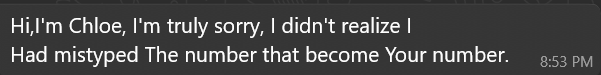

Both conversations began the same way: the sender claimed they had messaged the wrong person.

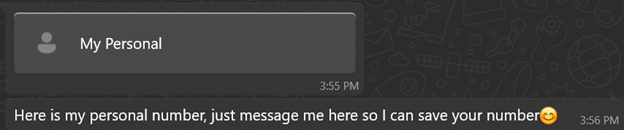

Verna:

Chloe:

That "wrong-number" ruse is low effort and high reward. It gives the out-of-the-blue message a plausible reason, invites a short helpful reply, and lowers suspicion. Two small but useful fingerprints appear immediately: random capitalization and awkward grammar. These recur later and help identify when different operators are involved.

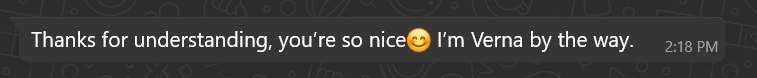

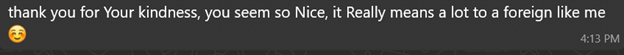

Phase 2: The immediate hook

If you reply politely, the scammer usually responds with an over-the-top compliment:

Verna:

Chloe:

These short flattering lines serve as rapid rapport builders: they feel personal and disarming.

Phase 3: Establishing identity and credibility

After a few messages, both claimed to be foreigners working in the UK:

Verna:

When asked what she does for a living:

When asked to explain her job:

Chloe:

When asked how COVID affected her life:

When asked about her job:

When asked what made her choose business:

Both claim the same job, Business Analyst, which later supports credibility when discussing investments. Claiming to be foreigners explains grammatical errors and factual mistakes about the UK. Notably, the job descriptions are long and well-written, lacking the earlier quirks, which suggests prewritten, copy-pasted content. This points to a playbook: flatter the target, establish credibility with occupation and location cover, then use scripted replies where legitimacy matters.

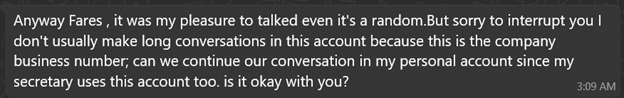

Phase 4: The hand-off

After a few days of texting, both explained they were using a business number and asked to move to a "personal" one:

Verna:

After I said it didn't bother me to switch:

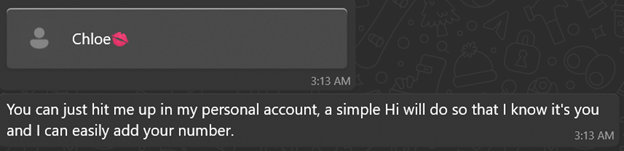

Chloe:

After the switch:

The excuse is plausible and low-friction. Once texting the new number, the writing style often changes, a strong sign of a hand-off to a different operator or team focused on long-term grooming.

Phase 5: The grooming phase (signs of a different operator)

The writing style shift is clear on the new numbers:

Verna:

When asked if she made friends at work:

When asked to share a steak recipe:

Chloe:

When asked what languages she speaks:

When asked about her studies:

When asked about work stress:

Responses show weaker English: more basic grammar errors, shorter sentences, quicker replies, daily "Good morning" routines, and frequent (likely stolen or AI-generated) photos. These changes strongly indicate a hand-off.

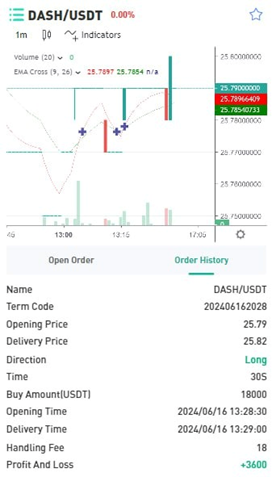

Phase 6: Credibility building

By the second week both began describing financial success and sent images of cars, apartments, gym visits, and meals to build trust:

Verna:

Pictures sent when asked about her side hustle:

When asked if investments are high risk:

When asked how she chooses investments:

Photo sent saying she finished work (face covered):

Chloe:

When asked about plans for her 30s:

When asked about foundations/programs:

Property photo (Australia):

Both positioned themselves as successful investors with diversified portfolios, building trust for future proposals. The wealth, charity, and expertise narratives emotionally prime the target. Direct money requests usually come much later, after deep emotional commitment.

Practical advice for readers

- If you receive a random "wrong number" message, be cautious: do not share personal information.

- Be suspicious if someone quickly asks to move off-platform or to a new number. Stay on the original platform until identity is verified.

- Ask for a live video call; repeated refusal is a major red flag.

- Reverse-image search any profile photos or images received.

- Never send money, gift cards, or personal documents to someone you only know online.


Scanning Webserver with /$(pwd)/ as a Starting Path, (Sun, Jan 25th)

Based on the sensors reporting to ISC, this activity started on 13 Jan 2026. My own sensor saw its first scan on 21 Jan 2026, with limited probes. So far, this activity has been limited to a few scans based on the reports available in ISC [5] (select Match Partial URL and Draw):

Is AI-Generated Code Secure?, (Thu, Jan 22nd)

The title of this diary is perhaps a bit catchy, but the question is important. I don’t consider myself a good developer. That’s not my day job, and I write code to improve my daily tasks. I like to say “I’m writing sh*ty code! It works for me, no warranty that it will work for you”. Today, most of my code (the skeleton of the program) is generated by AI, probably like most of you.