In my last Week in Review post, I shared what I’d been hearing from customers in the AI-Driven Development Lifecycle (AI-DLC) workshops I’ve been delivering. Last week I was back at it, this time in Denver, where I helped 17 teams deliver nearly 20 separate use cases in a two-day AI-DLC workshop. The acceleration that AI-DLC unlocks, especially when paired with tools like Claude Code on Amazon Bedrock, is fundamentally changing how businesses operate. Traditional roles within software development teams are collapsing into smaller, AI-augmented squads, and the shift is taking place right in front of us. To learn more about how to use various AI tools, visit the AI-DLC workflow GitHub repository.

This shift is also reshaping how AWS account teams (solutions architects, customer solutions managers, and technical account managers) collaborate with customers. The work is becoming less about handing off advisory design documents and more about building alongside customers in real time. It’s a genuinely exciting time to be in the middle of this change, and this week’s headline launch (Anthropic’s most capable model yet, now on AWS) is going to push that pace even further.

Now, let’s get into this week’s AWS news…

Headlines

Claude Opus 4.8 on AWS — Anthropic’s most capable generally available model is now accessible through both Amazon Bedrock and the Claude Platform on AWS. Opus 4.8 is built for agentic coding, knowledge work, and extended autonomous task execution — it sustains longer autonomous sessions with deeper reasoning, recovers from errors, and synthesizes information across lengthy documents. For coding workloads, it reads codebases like an engineer, plans before it edits, and holds context across long sessions. On Amazon Bedrock, you get AWS-managed features like Guardrails, Knowledge Bases, and data residency; on the Claude Platform on AWS, you get Anthropic’s native APIs unified with AWS billing. To learn more, visit the deep-dive blog post.
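If you want to try the Amazon Bedrock path, here is a minimal sketch of sending a prompt through the Bedrock Runtime Converse operation with an optional guardrail attached. It is an illustration under stated assumptions, not an official sample: the helper names `build_converse_request` and `ask` are hypothetical, and the model ID and guardrail identifier are left as parameters because this post does not list them, so look them up in the Bedrock console for your account and Region.

```python
from typing import Any, Optional


def build_converse_request(
    model_id: str,
    prompt: str,
    guardrail_id: Optional[str] = None,
    guardrail_version: str = "DRAFT",
    max_tokens: int = 1024,
) -> dict[str, Any]:
    """Build keyword arguments for the Bedrock Runtime Converse operation.

    model_id and guardrail_id are caller-supplied; no real identifiers
    are assumed here.
    """
    request: dict[str, Any] = {
        "modelId": model_id,
        # Converse expects a list of messages, each with a list of content blocks.
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }
    if guardrail_id is not None:
        # Attach a guardrail only when the caller provides one.
        request["guardrailConfig"] = {
            "guardrailIdentifier": guardrail_id,
            "guardrailVersion": guardrail_version,
        }
    return request


def ask(client: Any, **kwargs: Any) -> str:
    """Send one prompt and return the concatenated text blocks of the reply.

    `client` is expected to behave like boto3.client("bedrock-runtime").
    """
    response = client.converse(**build_converse_request(**kwargs))
    parts = response["output"]["message"]["content"]
    # Non-text content blocks are skipped rather than raising an error.
    return "".join(part.get("text", "") for part in parts)
```

With boto3 installed and AWS credentials configured, you would pass `boto3.client("bedrock-runtime")` as `client`, along with the `model_id` and `prompt` you want to use. Keeping the request builder separate from the call makes it easy to inspect or log the payload before anything is sent.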

Last week’s launches

Here are some launches and updates from this past week that caught my attention:

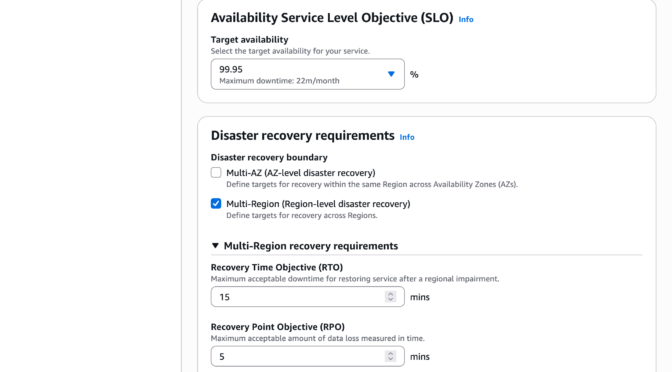

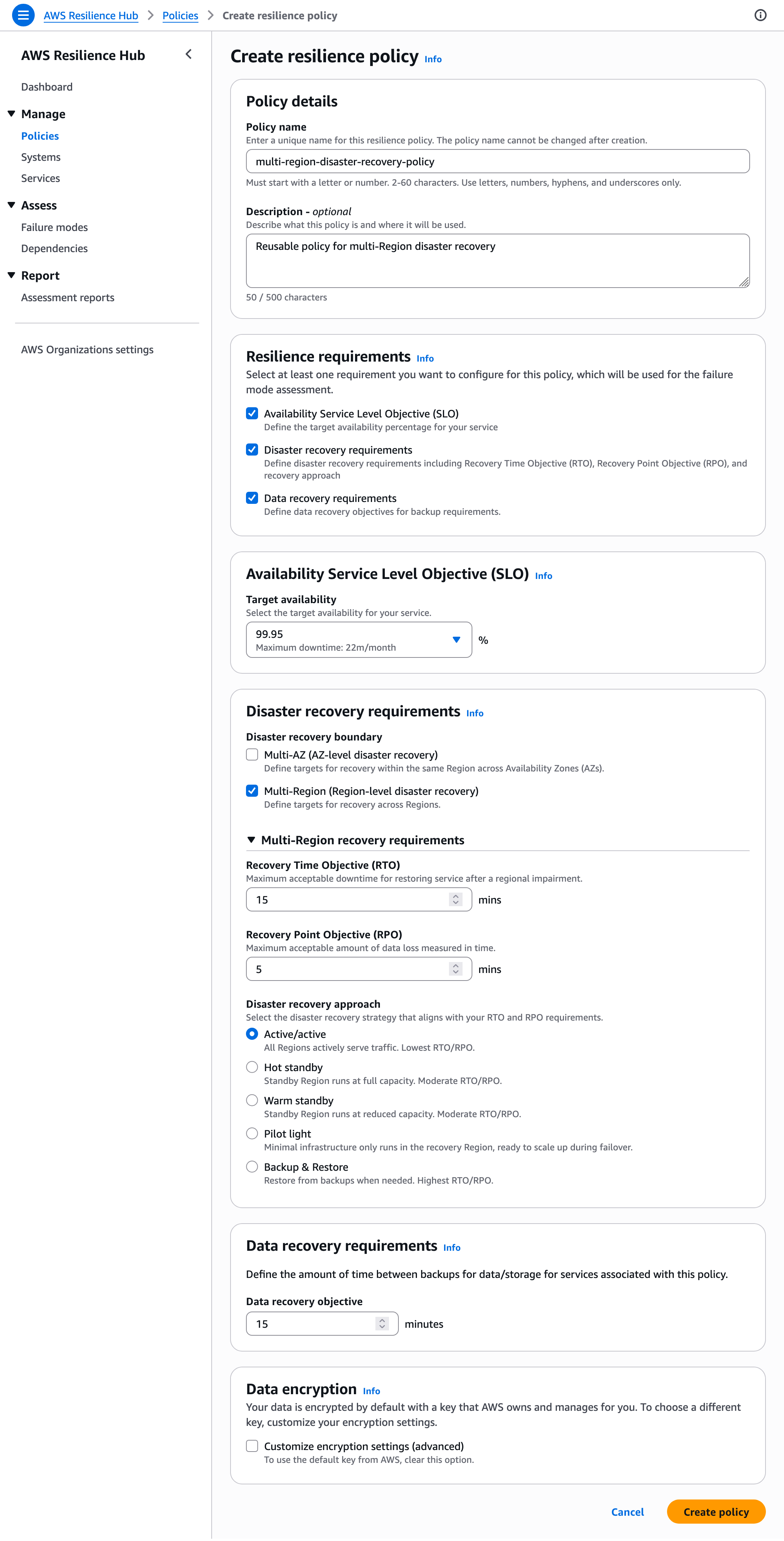

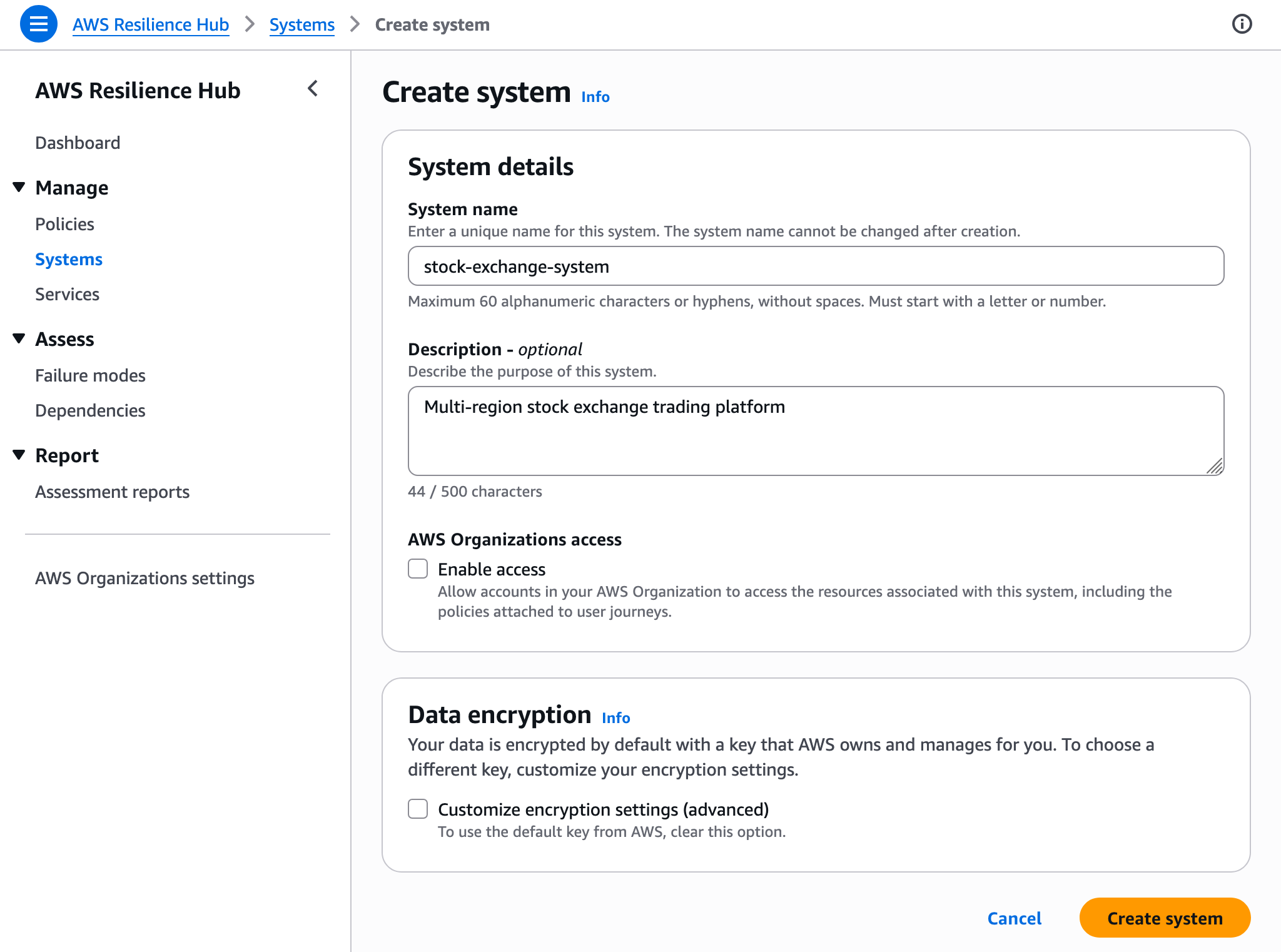

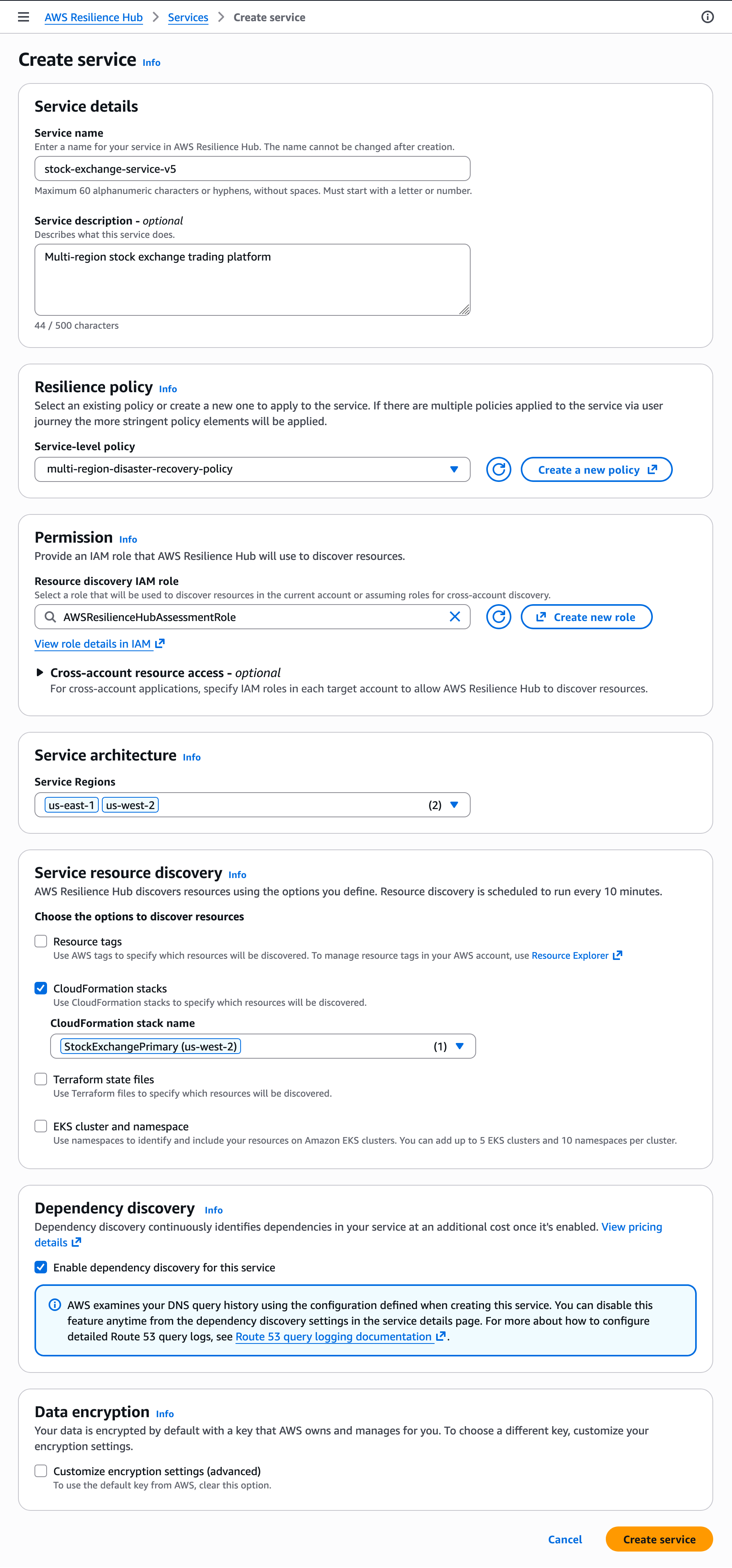

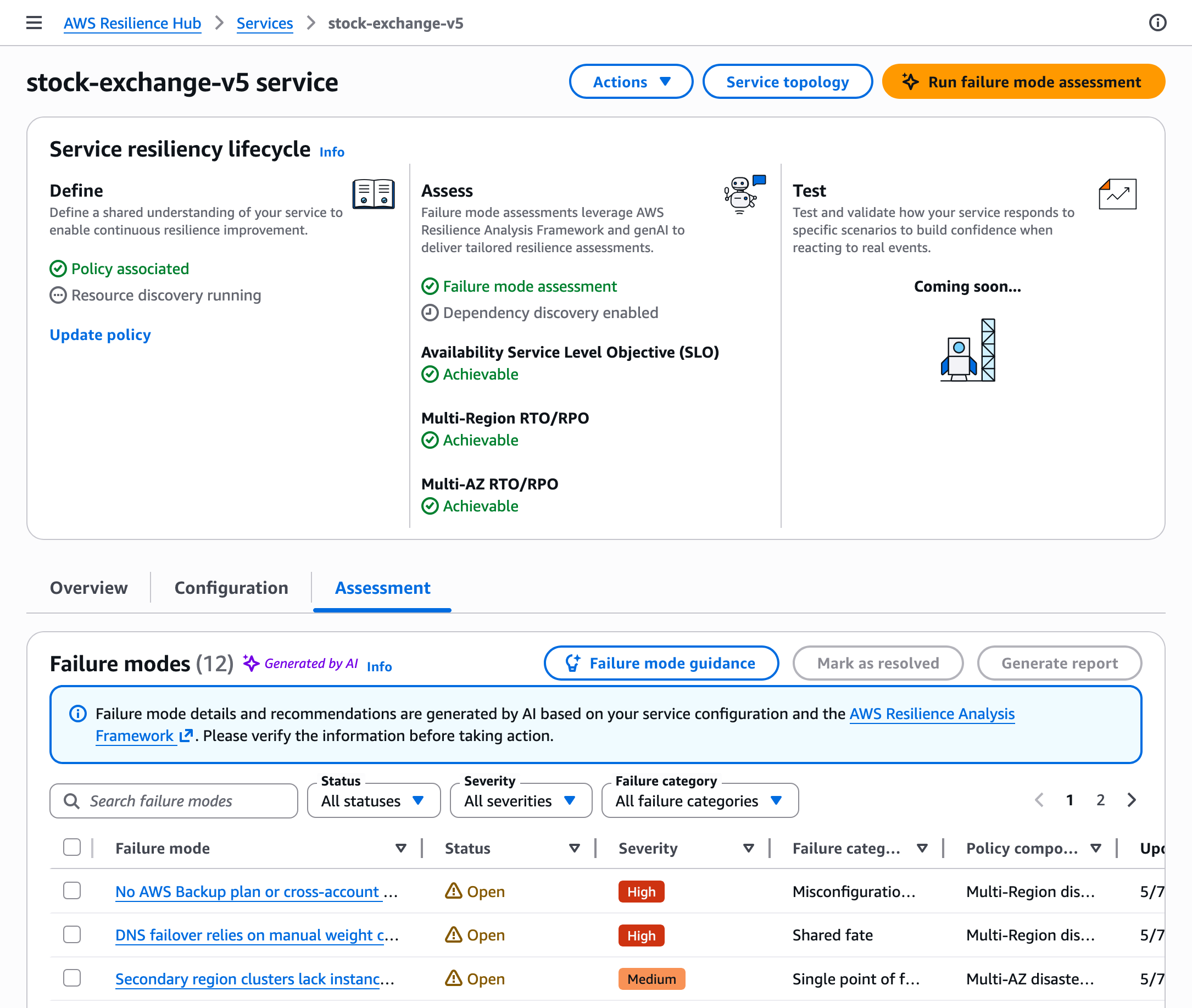

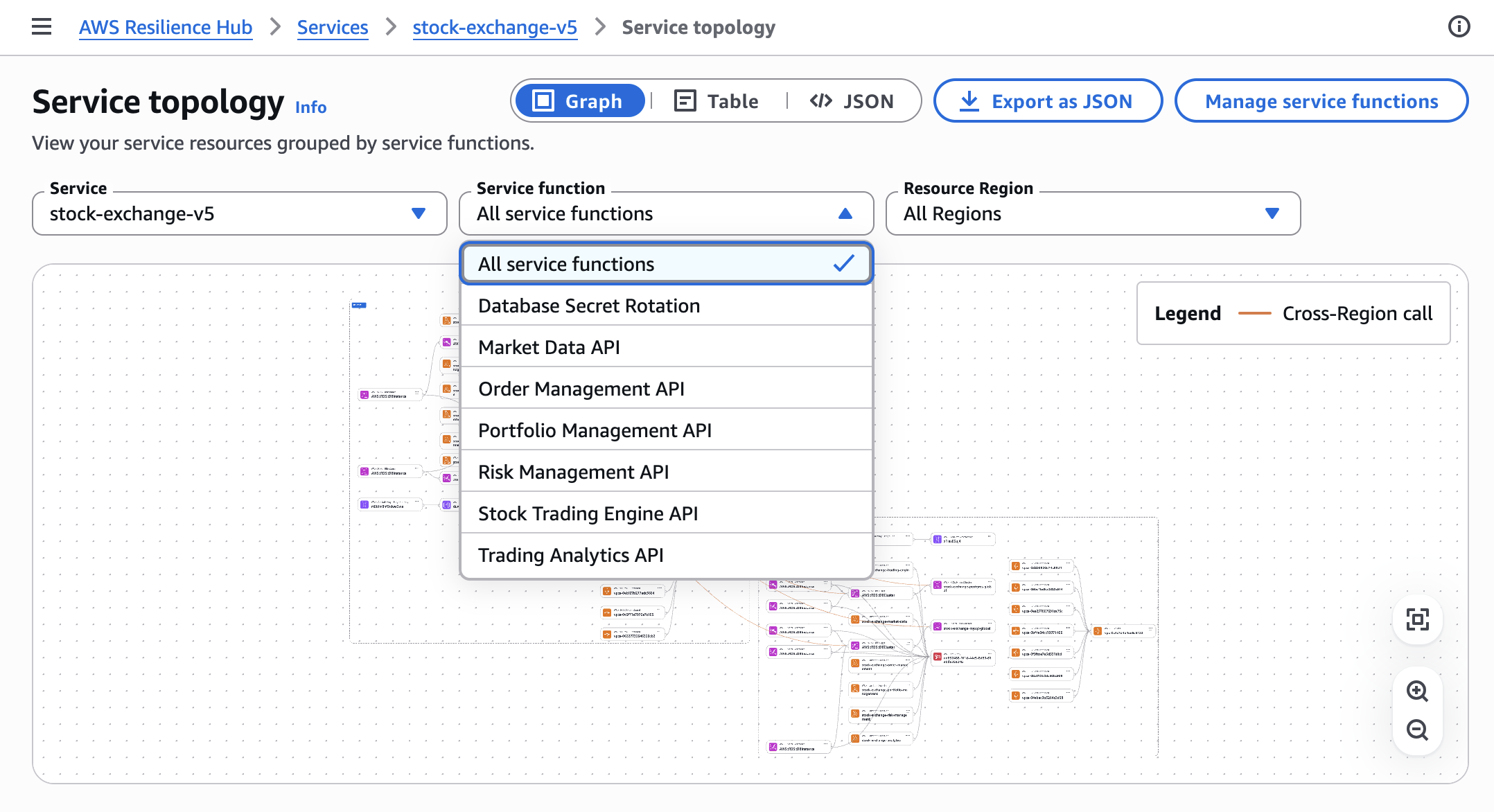

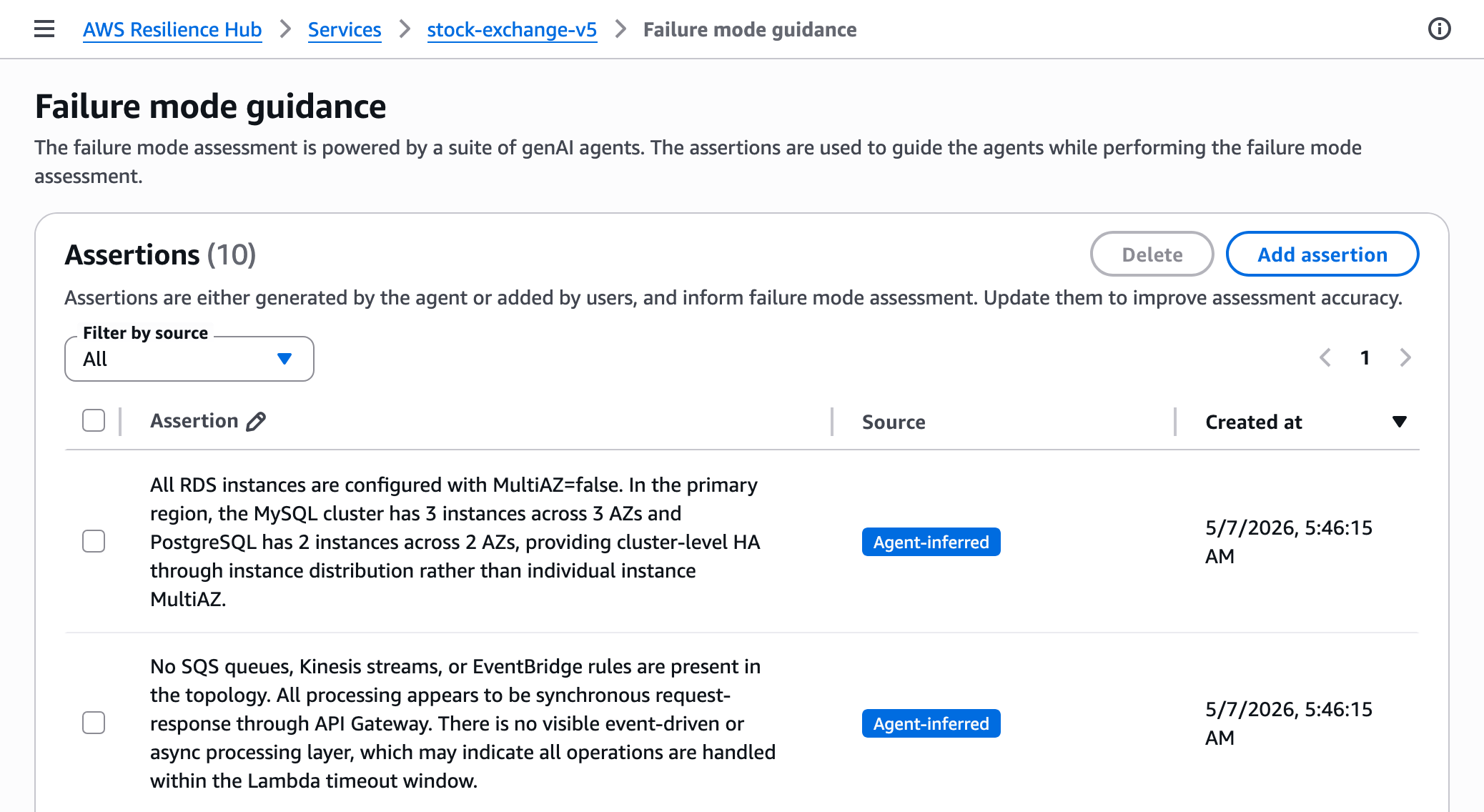

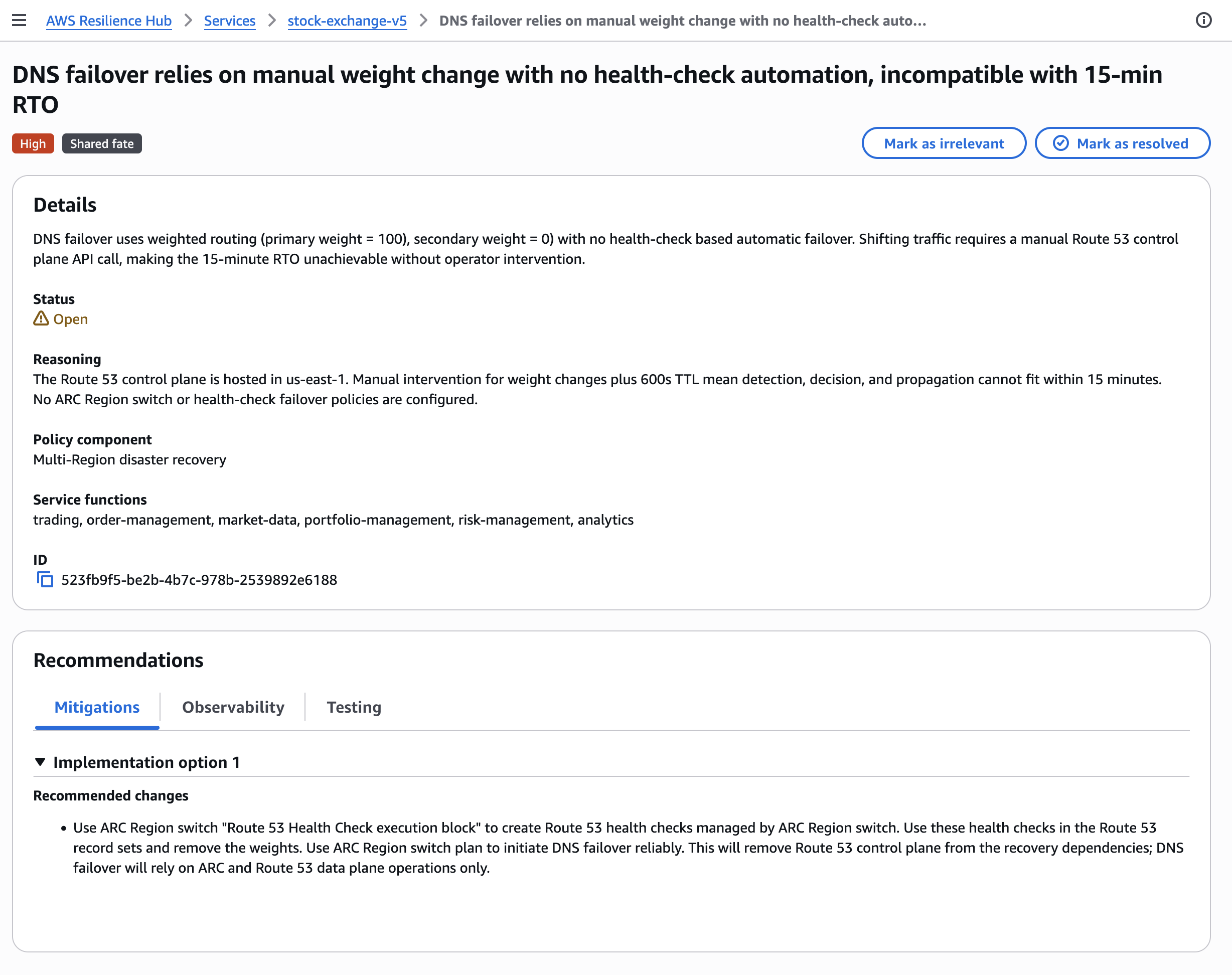

- Introducing the next generation of AWS Resilience Hub — A reimagined Resilience Hub gives SREs and developers a unified framework to define resilience standards, evaluate applications against them, and demonstrate compliance across an entire portfolio. It introduces modular resilience policies (covering service-level objectives (SLOs), multi-AZ/Region DR, and data recovery), business-oriented application modeling, generative AI-powered assessments aligned with the Well-Architected and Resilience Analysis Frameworks, and automatic dependency discovery via DNS query log analysis. Integration with AWS Organizations enables organization-wide resilience management from a single delegated administrator account.

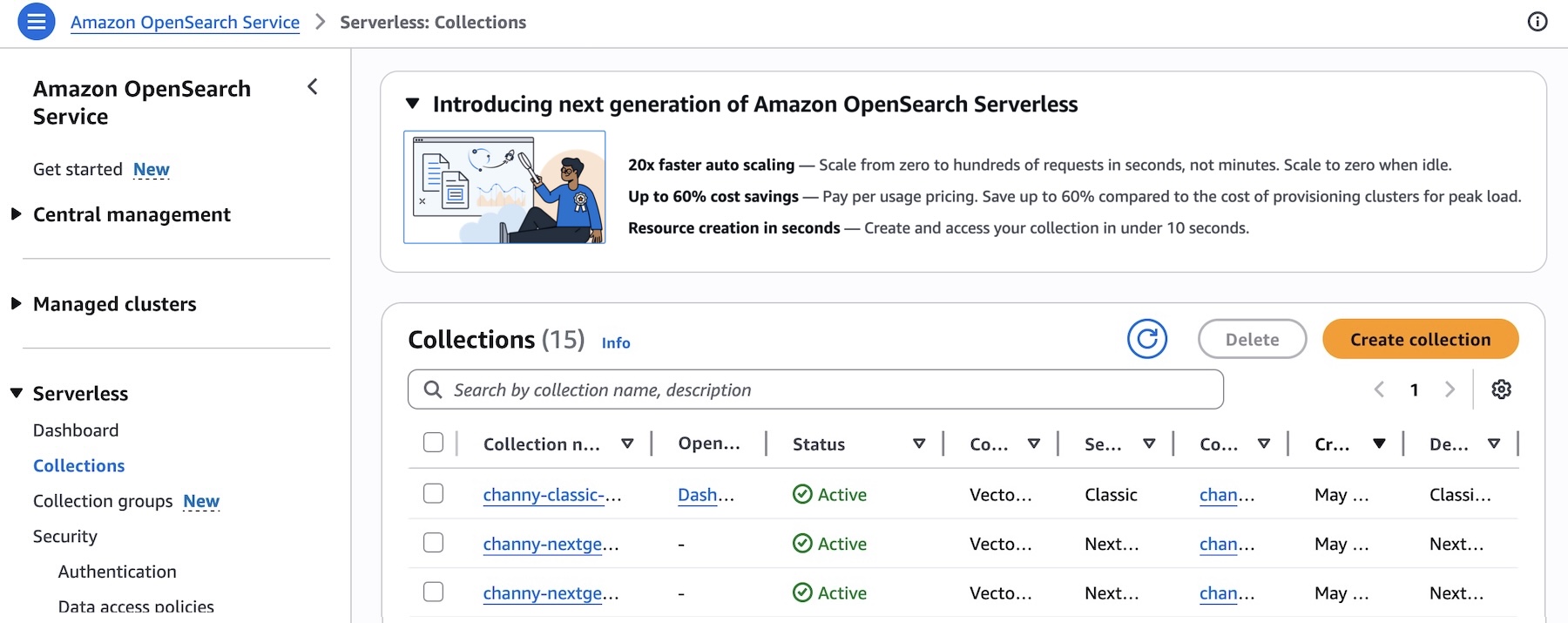

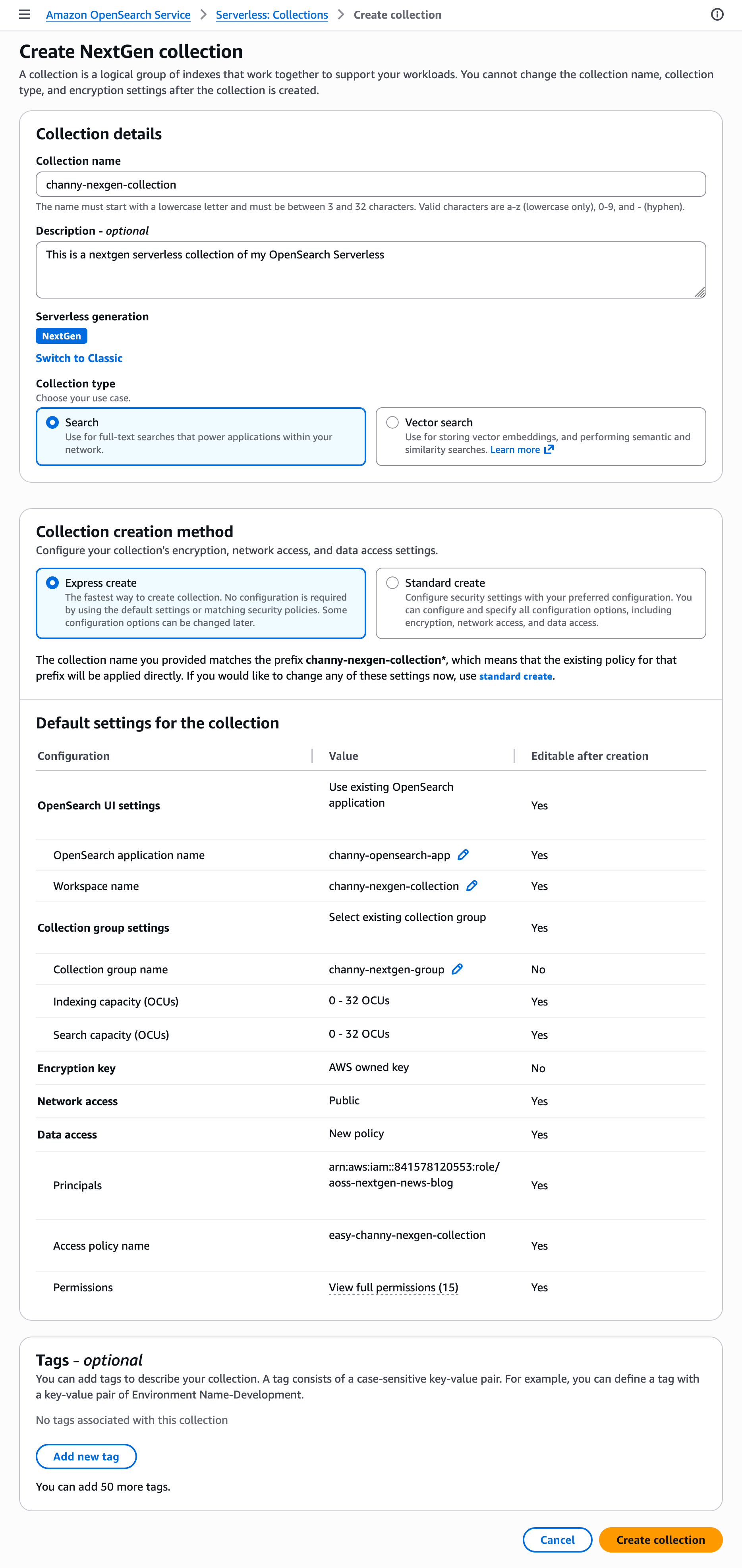

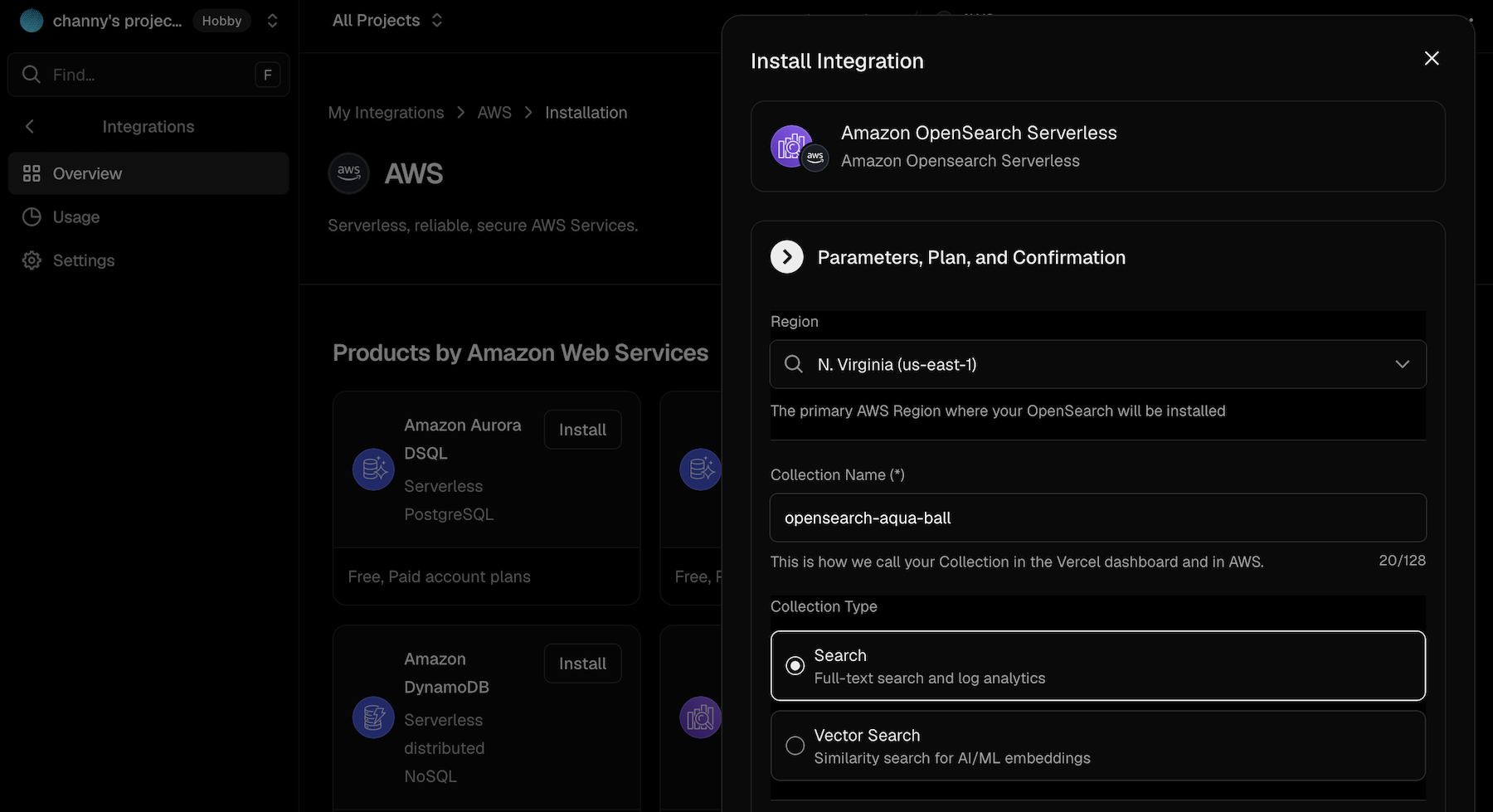

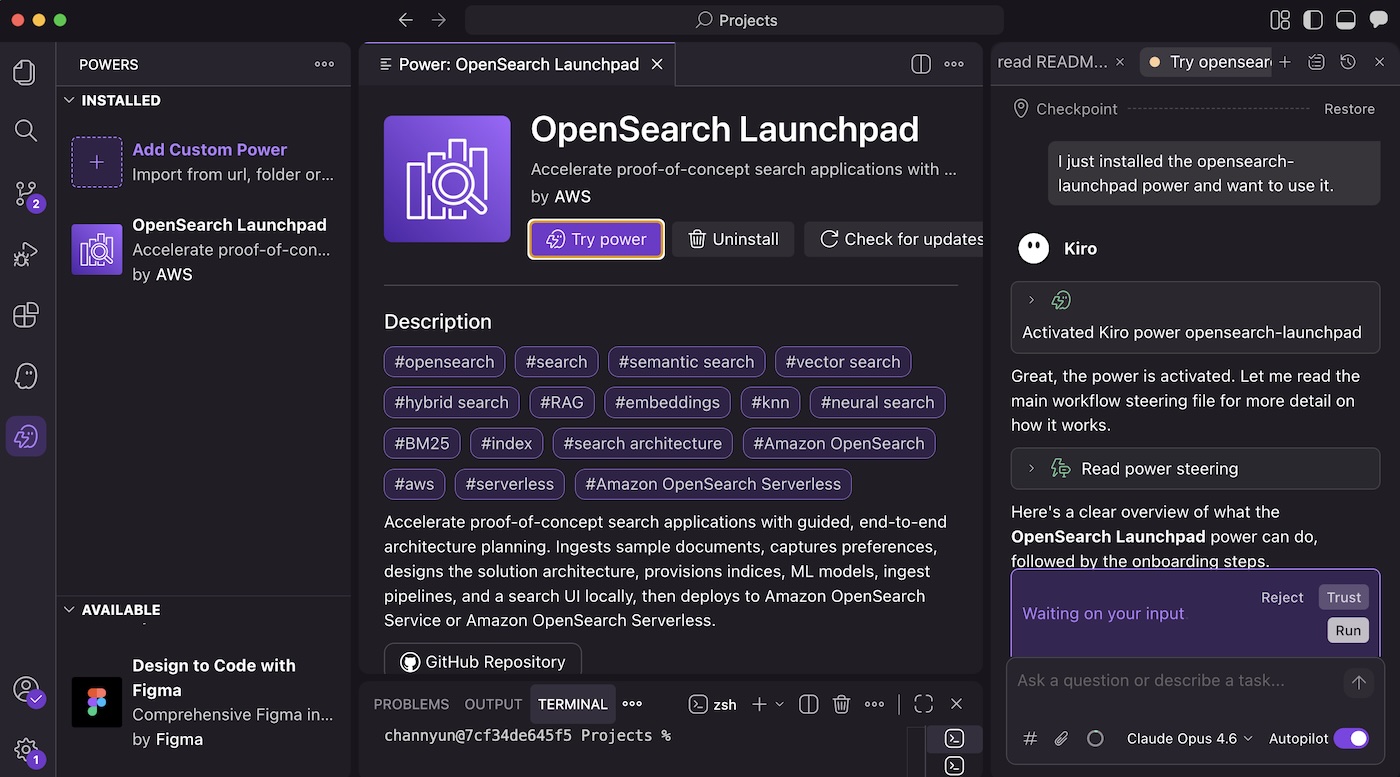

- Introducing the next generation of Amazon OpenSearch Serverless for building agentic AI applications — Amazon OpenSearch Serverless is now a fully managed search and vector engine purpose-built for agentic AI applications. It scales from zero to thousands of requests per second—roughly 20x faster than the prior generation—delivers up to 60% cost savings versus peak-provisioned clusters, and adds GPU acceleration plus new SEARCH and VECTORSEARCH collection types. Native integrations with Vercel, Kiro, Claude Code, and Cursor through OpenSearch Agent Skills make it straightforward to plug into your agent stack.

- New assessment capabilities in AWS Transform — AWS Transform expands with new tools to help you build migration business cases and evaluate TCO before moving workloads to AWS. You can ingest data from RVTools exports, CMDB data, the AWS Transform discovery tool, and third-party discovery tools, then run what-if scenarios across region, utilization, and service mapping for EC2, FSx, S3, SQL Server on EC2, and virtual desktops. The release also adds Agentic Readiness Analysis (ARA) and Modernization Analysis (MODA), which scan code repositories in 5 to 30 minutes per repo to surface severity-tagged findings with file-level evidence and AWS-mapped remediation guidance.

- Amazon Aurora MySQL with Kiro Powers — Aurora MySQL now integrates with Kiro Powers, drawing from a curated repository of pre-packaged MCP servers, steering files, and hooks validated by Kiro partners. Developers can execute both data plane tasks (queries, schema management) and control plane tasks (cluster management) in natural language, with dynamic guidance for Aurora MySQL Serverless scaling, RDS-to-Aurora migration, and replication setup. The companion Database Blog post explains how the agent produces the API calls, SQL, and configuration for you to review and run. The integration is available via one-click install from the Kiro IDE or webpage.

- Amazon WorkSpaces Applications now supports Windows Desktop OS — You can now bring your own Windows Desktop licenses to Amazon WorkSpaces Applications and stream full Windows desktops and applications from AWS-hosted dedicated hardware. BYOL eliminates OS fees (you pay only for compute and streaming infrastructure), supports eligible Microsoft 365 Apps for enterprise, and gives users a matching experience between local and remote environments — same workflows, shortcuts, and navigation in both.

For a full list of AWS announcements, be sure to keep an eye on the What’s New with AWS page.

Other AWS news

Here are some additional posts and resources that you might find interesting:

- Meet our newest AWS Heroes — May 2026 — An AWS Builder Center post introducing the latest cohort of AWS Heroes recognized for their contributions to the global AWS community.

- AI-native, full-stack web apps with Vercel and AWS databases — Database blog post on the new Vercel and AWS Databases integration, which lets you provision Aurora PostgreSQL, DynamoDB, or Aurora DSQL directly from the Vercel dashboard or via v0. The post also highlights the H0 hackathon with $160,000 in prizes for building full-stack apps on this stack.

- Introducing US-based, US citizen 24/7 technical support for AWS GovCloud (US) customers: Your mission never sleeps. Neither do we. — Public Sector blog post announcing that all AWS GovCloud (US) technical support cases are now automatically routed to US-based, US citizen engineers around the clock — no opt-in required — for government agencies, contractors, nonprofits, and academic institutions running mission-critical workloads.

For a full list of AWS blog posts, be sure to keep an eye on the AWS Blogs page.

To learn more about AWS, browse and join upcoming AWS-led in-person and virtual events, startup events, and developer-focused events, as well as AWS Summits and AWS Community Days. Join the AWS Builder Center to connect with builders, share solutions, and access content that supports your development.

That’s all for this week. Check back next Monday for another Weekly Roundup!

-Micah